Cities today generate an immense amount of visual information. Satellite imagery, street-level photographs, drone surveys, and construction documentation continuously capture the built environment in ways that were previously impossible. For architects and urban practitioners, this creates both an opportunity and a challenge: while the data is abundant, interpreting it manually is time-consuming, subjective, and difficult to scale.

As computational methods increasingly influence design and analysis workflows, there is growing interest in tools that can help interpret spatial information automatically. One such technique is image segmentation, a computer vision process that enables machines to interpret visual scenes by identifying and separating meaningful components within an image.

Segmentation allows architects, planners, and computational designers to move beyond viewing images as references and begin using them as analytical inputs.

What Image Segmentation Does

At its core, image segmentation divides an image into regions representing different objects or spatial classes. Each pixel is assigned to a category such as buildings, vegetation, roads, or sky, depending on the context and model used. This transforms raw visual data into a structured layer of information that can be measured, compared, or integrated into analytical workflows.
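To make the idea of "structured, measurable information" concrete, here is a minimal sketch that counts how much of an image each class occupies. The class ids, the `CLASS_NAMES` mapping, and the toy 4x4 label map are assumptions for illustration; a real model defines its own label scheme and produces much larger outputs.

```python
from collections import Counter

# Hypothetical class ids; a real segmentation model defines its own scheme.
CLASS_NAMES = {0: "sky", 1: "building", 2: "vegetation", 3: "road"}


def class_coverage(label_map):
    """Return the fraction of pixels assigned to each class name."""
    counts = Counter(pixel for row in label_map for pixel in row)
    total = sum(counts.values())
    return {CLASS_NAMES[class_id]: n / total for class_id, n in counts.items()}


# Toy 4x4 label map standing in for model output (one class id per pixel).
label_map = [
    [0, 0, 0, 0],
    [1, 1, 2, 0],
    [1, 1, 2, 2],
    [3, 3, 3, 3],
]

coverage = class_coverage(label_map)
for name, share in sorted(coverage.items()):
    print(f"{name}: {share:.1%}")
```

Once the image is a grid of class ids, questions such as "how much of this view is vegetation?" reduce to simple counting.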

Different approaches exist. Semantic segmentation classifies pixels according to type, while instance segmentation distinguishes individual objects within the same class.
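The difference can be illustrated with a small sketch. A semantic mask only says "this pixel is building"; the function below splits that mask into separate blobs by grouping connected pixels. This is a simplified stand-in, not how instance segmentation models work internally: they predict instances directly, and connected-component grouping fails when two buildings touch. The mask and the function name `label_instances` are hypothetical.

```python
from collections import deque


def label_instances(mask):
    """Give each 4-connected blob of 1s in a binary mask its own id (1, 2, ...)."""
    rows, cols = len(mask), len(mask[0])
    instances = [[0] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1 or instances[r][c] != 0:
                continue  # background, or already part of a labelled blob
            next_id += 1
            instances[r][c] = next_id
            queue = deque([(r, c)])
            while queue:  # flood-fill outward from the seed pixel
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] == 1 and instances[ny][nx] == 0:
                        instances[ny][nx] = next_id
                        queue.append((ny, nx))
    return instances, next_id


# Semantic output: 1 = building, 0 = anything else.
building_mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [1, 0, 0, 0, 0],
]

instances, count = label_instances(building_mask)
print(f"{count} separate building blobs")
for row in instances:
    print(row)
```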

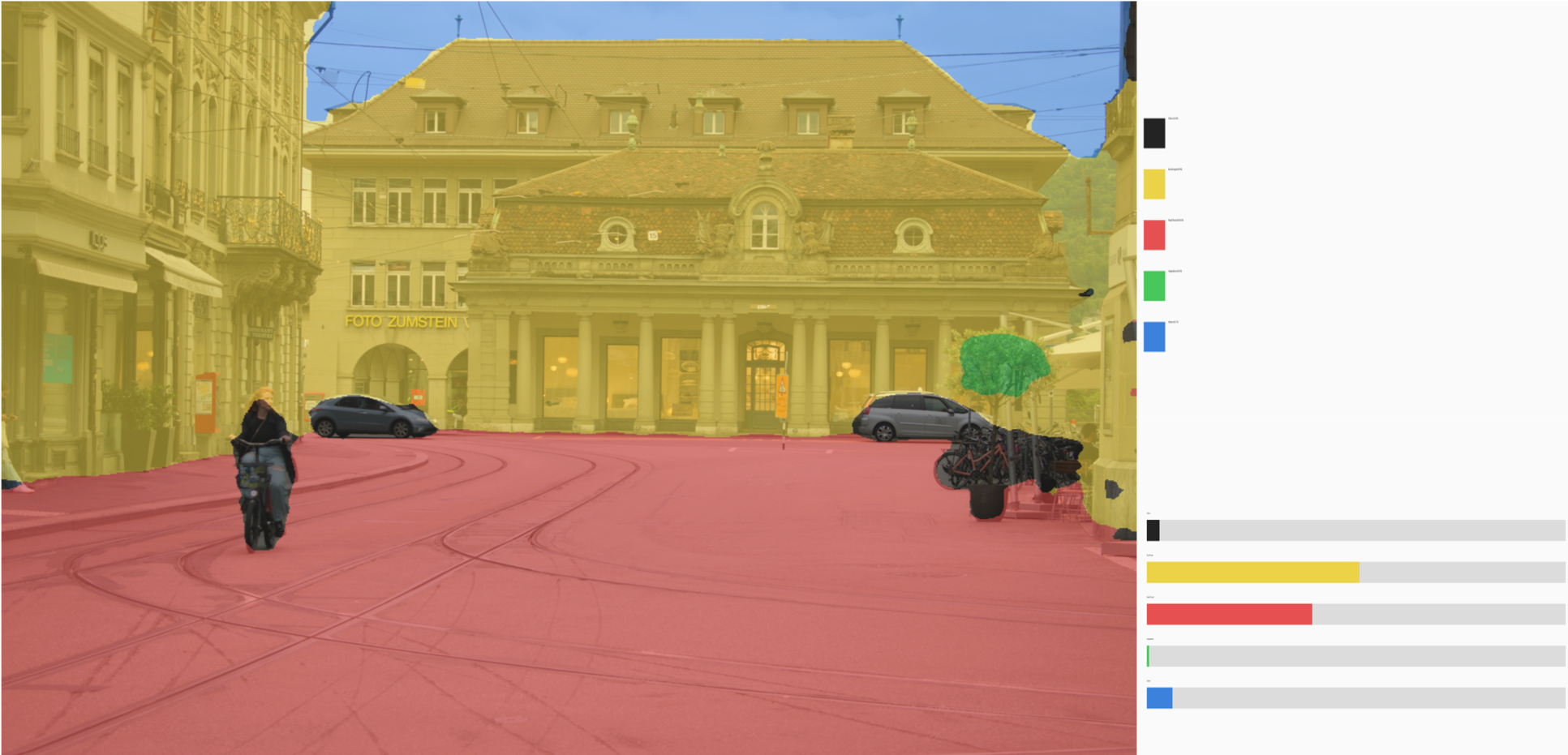

The segmented output demonstrates how pixel-level classification reorganizes visual scenes into structured spatial layers. Distinct regions representing built form, vegetation, circulation surfaces, and human presence illustrate the transition from raw imagery to categorized data.

Relevance to Urban Context

At an urban scale, segmentation can support various analytical tasks that traditionally require extensive manual mapping or GIS pre-processing. Automatically identifying buildings, roads, open areas, and vegetation allows for rapid interpretation of land-use patterns and spatial density. This can assist in preliminary site studies, urban morphology assessments, or environmental evaluations.

Beyond mapping, segmented outputs can serve as inputs for computational simulations, visualization overlays, or spatial metrics generation, extending their relevance beyond simple classification.

The segmentation above reveals the dominance of built surface coverage and the limited distribution of vegetation, demonstrating how automated classification supports rapid interpretation of urban morphology. Automated segmentation can convert a complex urban scene into measurable spatial data within seconds, reducing the need for manual GIS pre-classification.
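One way such measurable data might be derived is a coarse density grid: the built mask is divided into square tiles, and the share of built pixels is computed per tile. This is a sketch under assumptions; the function name `built_density_grid`, the tile size, and the toy mask are illustrative, and a real study would first relate pixels to ground distance.

```python
def built_density_grid(mask, cell):
    """Share of built pixels (value 1) in each cell x cell tile of a binary mask."""
    rows, cols = len(mask), len(mask[0])
    grid = []
    for r0 in range(0, rows, cell):
        grid_row = []
        for c0 in range(0, cols, cell):
            # Collect the pixels of this tile, clipping at the image edge.
            tile = [
                mask[r][c]
                for r in range(r0, min(r0 + cell, rows))
                for c in range(c0, min(c0 + cell, cols))
            ]
            grid_row.append(sum(tile) / len(tile))
        grid.append(grid_row)
    return grid


# Toy mask: 1 = built surface, 0 = open or vegetated.
built_mask = [
    [1, 1, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [1, 0, 0, 0],
]

density = built_density_grid(built_mask, cell=2)
for row in density:
    print(row)
```

Each value is a built-coverage ratio for one tile, which can be mapped back onto the site as a simple density overlay.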

Relevance to Architectural Workflows

On an architectural scale, segmentation opens possibilities for interpreting building-level information. Facade elements, materials, openings, or structural features can be detected and categorized, allowing visual documentation to contribute directly to analytical workflows. This can support tasks such as condition assessment, contextual evaluation, or material mapping.

Although these processes are still evolving within everyday practice, they illustrate how image-driven perception may increasingly complement traditional documentation methods.

The above segmentation highlights the extraction of facade regions, ground planes, and contextual elements, demonstrating how automated visual classification can support building-level interpretation.

Integration into Computational Pipelines

Segmentation becomes useful when the output stops being just an image and starts becoming usable data. Once parts of a scene are separated (buildings, roads, vegetation, facade regions), those regions can be extracted as masks or spatial layers and fed into other workflows.
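As a rough sketch of that extraction step, the snippet below splits a single label map into one binary mask per class. The class ids, names, and helper functions are assumptions for illustration and do not correspond to any particular model or library.

```python
def extract_mask(label_map, class_id):
    """Binary mask: 1 where the pixel belongs to class_id, 0 elsewhere."""
    return [[1 if pixel == class_id else 0 for pixel in row] for row in label_map]


def split_layers(label_map, class_names):
    """Build one binary mask per class, keyed by class name."""
    return {name: extract_mask(label_map, cid) for cid, name in class_names.items()}


# Hypothetical label scheme and a toy 3x3 label map.
class_names = {1: "building", 2: "vegetation", 3: "road"}
label_map = [
    [1, 1, 3],
    [2, 1, 3],
    [2, 2, 3],
]

layers = split_layers(label_map, class_names)
for name, mask in layers.items():
    print(name, mask)
```

Each mask can then be handled on its own, for example exported as a raster layer or passed to a vectorization step.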

For example, segmented building footprints from aerial imagery can be vectorized and used as base geometry for parametric studies. Road networks identified from imagery can inform circulation graphs or accessibility analysis. At a smaller scale, facade segmentation can guide material mapping or contextual modelling without manually tracing references.
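The road-network case can be sketched in a few lines. Here every road pixel becomes a graph node linked to its neighbouring road pixels, and a breadth-first search counts the steps between two points. This is a deliberately naive illustration: the function names and the toy mask are hypothetical, and practical workflows usually thin the road mask to centrelines and work in ground units rather than pixels.

```python
from collections import deque


def road_graph(mask):
    """Adjacency dict linking each road pixel to its 4-connected road neighbours."""
    rows, cols = len(mask), len(mask[0])
    graph = {}
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1:
                continue
            graph[(r, c)] = [
                (nr, nc)
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= nr < rows and 0 <= nc < cols and mask[nr][nc] == 1
            ]
    return graph


def shortest_steps(graph, start, goal):
    """Breadth-first search: pixel steps from start to goal, or None if unreachable."""
    if start not in graph or goal not in graph:
        return None
    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            return distance[node]
        for neighbour in graph[node]:
            if neighbour not in distance:
                distance[neighbour] = distance[node] + 1
                queue.append(neighbour)
    return None


# Toy mask: 1 = road pixel. The pixel at (2, 0) is cut off from the rest.
road_mask = [
    [1, 1, 1, 0],
    [0, 0, 1, 0],
    [1, 0, 1, 1],
]

graph = road_graph(road_mask)
steps = shortest_steps(graph, (0, 0), (2, 3))
print("steps along the road:", steps)
print("isolated segment reachable:", shortest_steps(graph, (0, 0), (2, 0)) is not None)
```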

Limitations

Despite its capabilities, image segmentation is not flawless. Accuracy depends heavily on image quality, environmental conditions, and the context in which models were trained. Shadows, occlusions, or unfamiliar urban patterns can lead to incorrect classifications.

For this reason, segmentation should be treated as an assistive analytical layer rather than a definitive reading of space. Interpretation and validation remain essential architectural responsibilities.
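One common way to support that validation is to compare a predicted mask against a small hand-labelled reference using intersection over union (IoU), a standard overlap score between 0 and 1. The sketch below assumes both masks are binary grids of the same size; the sample values are invented for illustration.

```python
def intersection_over_union(predicted, reference):
    """IoU of two equally sized binary masks (1 = class present)."""
    intersection = 0
    union = 0
    for pred_row, ref_row in zip(predicted, reference):
        for p, t in zip(pred_row, ref_row):
            if p == 1 and t == 1:
                intersection += 1
            if p == 1 or t == 1:
                union += 1
    # Two empty masks agree perfectly, so treat that case as 1.0.
    return intersection / union if union else 1.0


# Invented example: model output versus a manually traced reference.
predicted = [
    [1, 1, 0],
    [1, 0, 0],
]
reference = [
    [1, 1, 0],
    [0, 1, 0],
]

score = intersection_over_union(predicted, reference)
print(f"IoU: {score:.2f}")
```

A low score on a sample of manually checked images is a signal that the model does not suit the site conditions and that its output needs closer review.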

Conclusion

Image segmentation enhances urban and architectural analysis by converting imagery into readable spatial layers. This makes it easier to detect patterns, extract context, and begin analytical workflows without extensive manual tracing. While interpretation remains human-led, segmentation speeds up how visual information becomes usable for design reasoning.
